Tips for preparing your resume

Disclaimer: This post reflects my personal views and not those of my employer.

In my previous post providing tips for interviewing at Google, I included the sentence “If you don’t know anyone at Google, you’ve already applied and haven’t heard back in a while, feel free to send me a note with your CV and I’ll see if there’s something I can do.”

I received a number of requests from people who had applied but never heard back. In most of these cases, I spotted issues with their resumes, which may or may not explain why they never heard back. In an effort to help others who are getting ready to apply for a job, I decided to write a new blog post with tips on how to prepare your resume for application.

Scalable methods for computing state similarity in deterministic MDPs

This post describes my paper Scalable methods for computing state similarity in deterministic MDPs, published at AAAI 2020. The code is available here.

Motivation

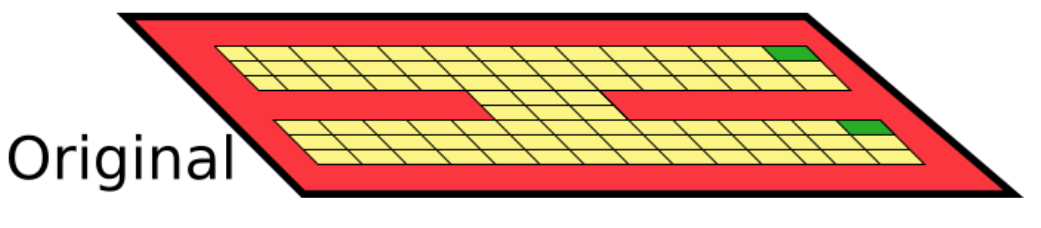

We consider distance metrics between states in an MDP. Take the following MDP, where the goal is to reach the green cells:

Physical distance between states?

Physical distance often fails to capture the similarity properties we’d like:

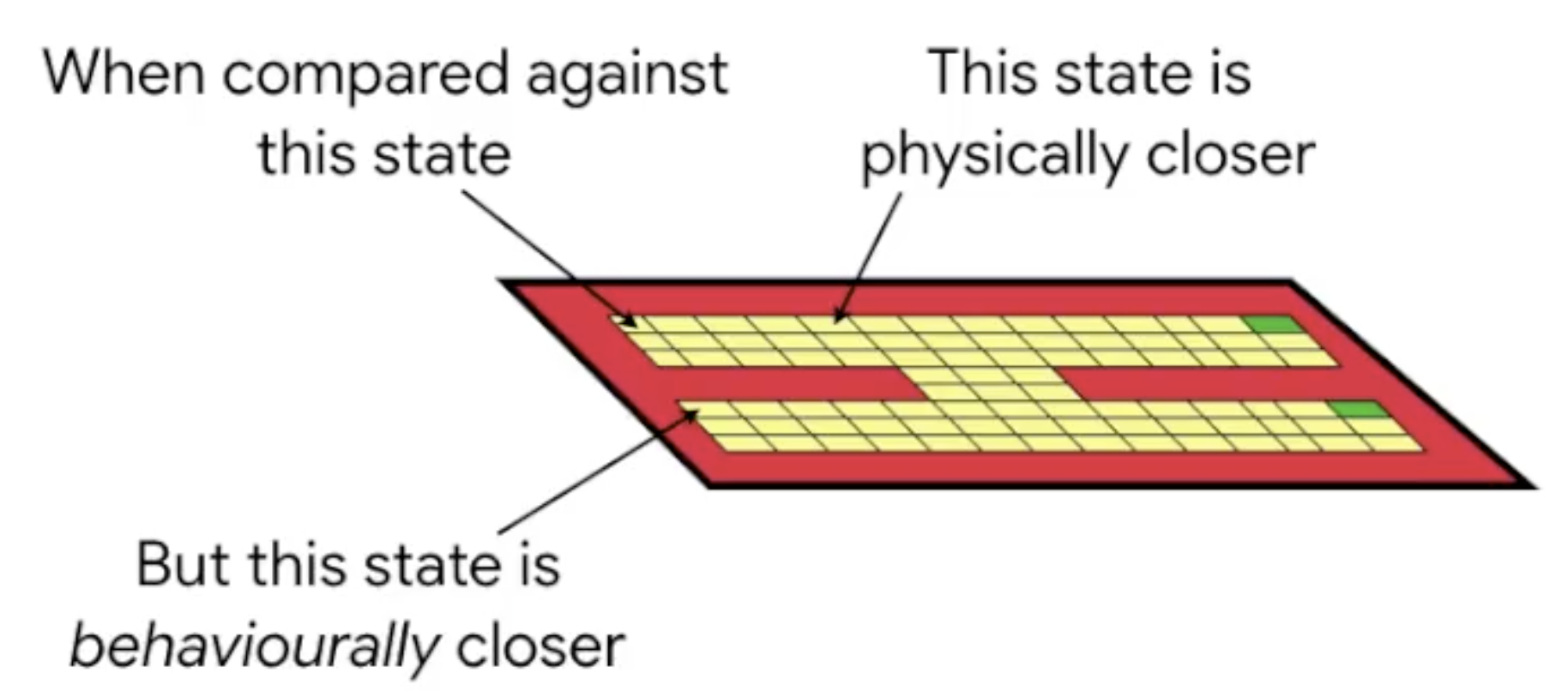

State abstractions

Now imagine we add an exact copy of these states to the MDP (think of it as an additional “floor”):

The Cost of Beauty

In “The Evolution of Beauty”, Richard O. Prum argues that many of the ornaments present in animals need not have an adaptationist purpose (as is commonly believed), but can instead be the result of aesthetic choices made by females.

This web app is inspired by that idea. It creates a set of male and female Things that can mate and reproduce.

Courting

Males try to seduce females, and females select the most attractive male. Males have to catch up to the females (before they die) in order to reproduce.

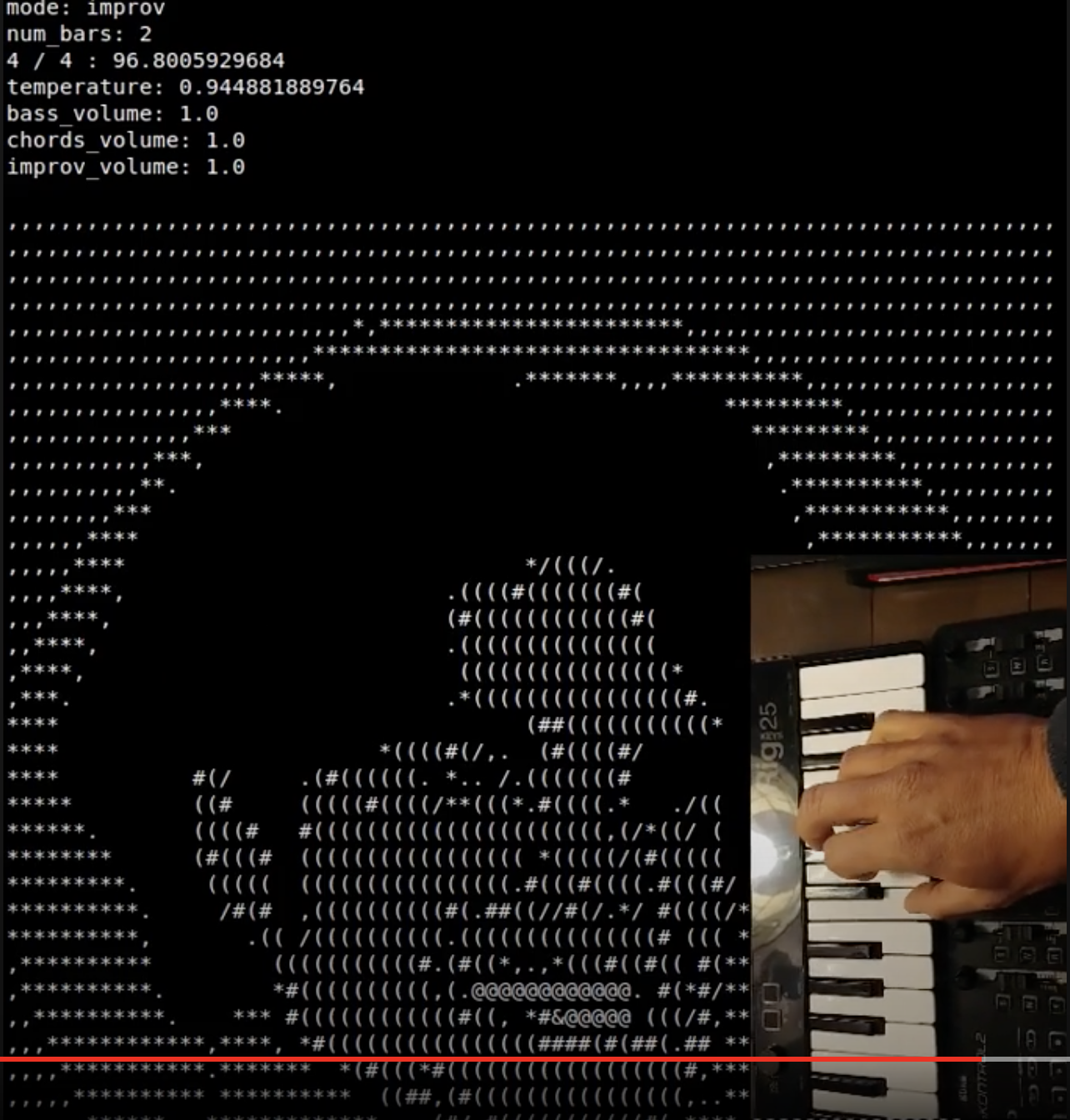

ML-Jam: Performing Structured Improvisations with Pre-trained Models

This paper, published at the International Conference on Computational Creativity, 2019, explores using pre-trained musical generative models in a collaborative setting for improvisation.

You can read more details about it in this blog post.

You can also play with it in this web app!

If you want to play with the code, it is here.

Demos

Demo video playing with the web app:

Demo video jamming over Herbie Hancock’s Chameleon:

Demo video over free improvisation:

Musical Aquarium

I created this website as an experiment to learn p5.js. It creates programmatic “music” based on the interaction of the fish you create.

You create fish by clicking anywhere on the screen. The x-axis of the position where you click determines the pitch (taken from the D minor pentatonic scale), while the length of the click determines the size and the speed of the fish.
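The click-to-fish mapping described above can be sketched roughly as follows. This is a minimal illustration in Python rather than the site's actual p5.js code. The function names, the two-octave range, the D4 root, the size and speed ranges, and the choice that a longer click gives a larger, slower fish are all assumptions made for the example.

```python
# Semitone offsets of a minor pentatonic scale above its root.
PENTATONIC_OFFSETS = [0, 3, 5, 7, 10]
D4_MIDI = 62  # MIDI note number for D4 (assumed root for this sketch)


def scale_notes(root=D4_MIDI, octaves=2):
    """Return MIDI note numbers of the minor pentatonic scale over some octaves."""
    return [root + 12 * o + off
            for o in range(octaves)
            for off in PENTATONIC_OFFSETS]


def pitch_for_x(x, width, notes):
    """Map a horizontal click position to one of the scale notes."""
    x = min(max(x, 0), width - 1)         # clamp to the canvas
    index = int(x / width * len(notes))   # split the width into equal bands
    return notes[index]


def fish_for_click(x, width, press_seconds, max_press=2.0):
    """Build a hypothetical fish description from a click.

    Assumption: a longer press gives a larger and slower fish. The original
    post only says that click length determines size and speed.
    """
    t = min(max(press_seconds, 0.0), max_press) / max_press  # normalise to 0..1
    return {
        "pitch": pitch_for_x(x, width, scale_notes()),
        "size": 10 + 40 * t,
        "speed": 5 - 4 * t,
    }


if __name__ == "__main__":
    print(fish_for_click(x=400, width=800, press_seconds=1.0))
```

Restricting pitches to a pentatonic scale means that any two fish that collide will sound reasonably consonant together, which is presumably why a scale like this suits a random, collision-driven piece.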

Whenever the fish bump into each other they “sing” and move away. Enjoy!

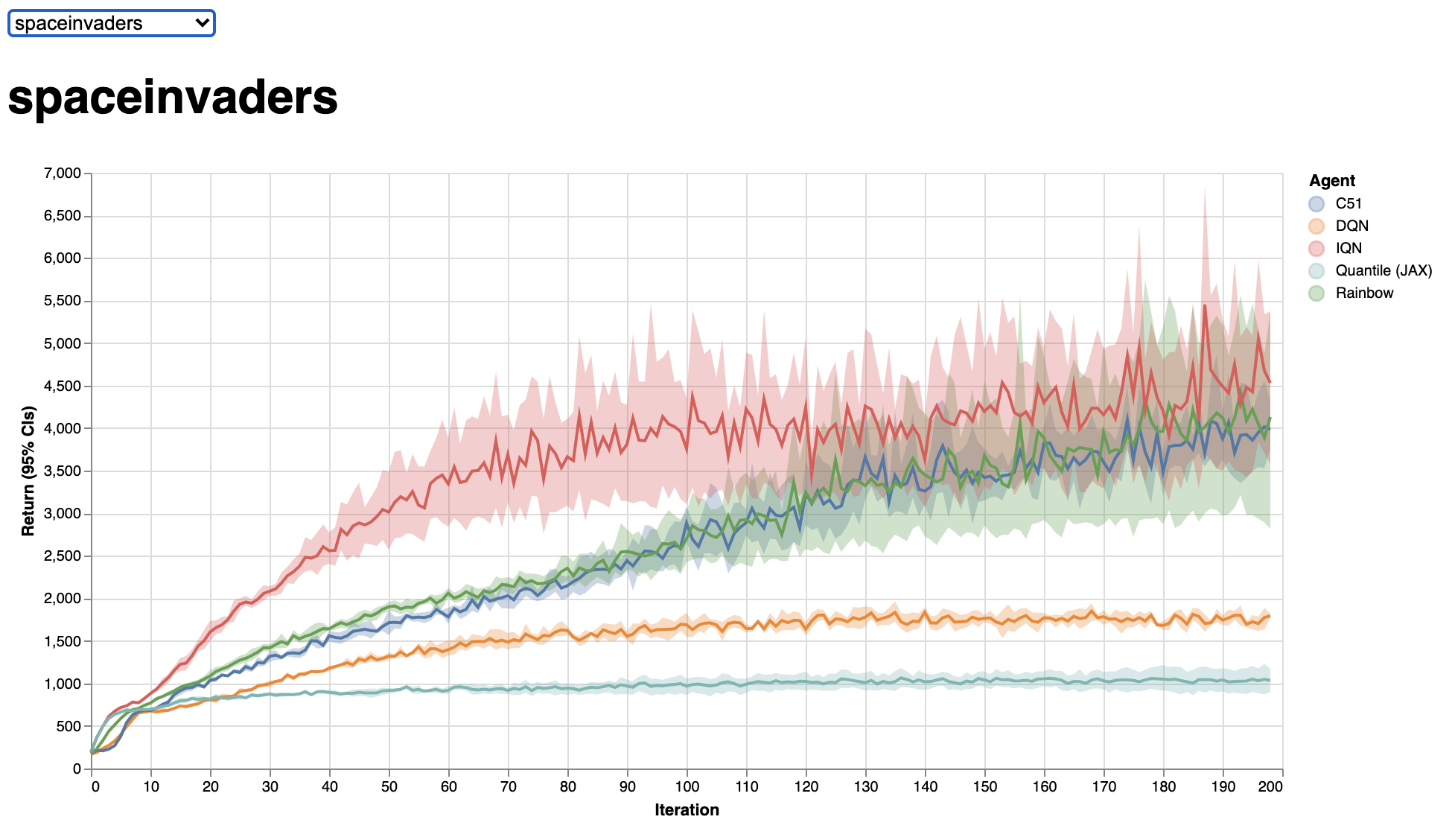

Dopamine: A framework for flexible value-based reinforcement learning research

Dopamine is a framework for flexible, value-based, reinforcement learning research. It was originally written in TensorFlow, but now all agents have been implemented in JAX.

You can read more about it on our GitHub page and in our white paper.

We have a website where you can easily compare the performance of all the Dopamine agents, which I find really useful:

We also provide a set of Colaboratory notebooks that make it easier to understand the framework:

JiDiJi: An Experiment in Musical Representation

I made this website to convert between music and colours. Read below for details!

About

Music can be represented in various forms: as a series of sounds, as a score, as tabs (for guitar), as a series of chord names, as MIDI, as a NoteSequence protocol buffer, and more.

I stumbled upon the Solresol language recently, as well as upon this talk by Adam Neely, and realized that you can also represent music as colours.
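To make the idea concrete, here is a small sketch of a note-to-colour conversion in Python. It uses the traditional Solresol pairing of the seven solfège syllables with the seven colours of the rainbow. The fixed-do mapping from note letters to syllables and the function name are assumptions for illustration, and this is not necessarily the mapping JiDiJi itself uses.

```python
# Traditional Solresol pairing of solfège syllables with rainbow colours.
SOLRESOL_COLOURS = {
    "do": "red",
    "re": "orange",
    "mi": "yellow",
    "fa": "green",
    "sol": "blue",
    "la": "indigo",
    "si": "violet",
}

# Assumption: fixed-do solfège, so C is always "do".
NOTE_TO_SYLLABLE = {
    "C": "do", "D": "re", "E": "mi", "F": "fa",
    "G": "sol", "A": "la", "B": "si",
}


def melody_to_colours(notes):
    """Convert a list of natural note names (e.g. ["C", "E", "G"]) to colours."""
    return [SOLRESOL_COLOURS[NOTE_TO_SYLLABLE[note]] for note in notes]


if __name__ == "__main__":
    print(melody_to_colours(["C", "E", "G"]))
```

This sketch handles only the seven natural notes. Sharps and flats would need an extended palette, which is a design decision the mapping above does not address.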